Imagine you're running an online store. Every second, customers are placing orders, updating their profiles, and adding items to their carts. Now, you need to keep your search engine updated, send order confirmations, and sync data to your analytics dashboard.

Here's a question: How do you keep all these systems in sync?

Option 1: Process the entire database every few minutes to find what changed

Option 2: Only capture and process the data that actually changed

Which sounds smarter? Option 2, right?

That's exactly what Change Data Capture (CDC) does. Instead of scanning through millions of records to find updates, CDC tracks only what changed. Every new order, every profile update, every deletion, as it happens.

Think of it like this: Would you rather read an entire newspaper to find one new article, or get a notification showing you exactly what's new? CDC is that notification system for your database.

Change Data Capture is a technique that tracks three simple things in your database:

Inserts (new data added)

Updates (existing data modified)

Deletes (data removed)

Instead of asking "what does my database look like now?", CDC asks "what just changed?"

Let's say you have a customers table with 1 million records. In the last hour, only 50 customers updated their addresses.

Without CDC: You'd have to scan all 1 million records to find those 50 changes.

With CDC: You instantly know exactly which 50 records changed, what the old values were, and what the new values are.
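To make the difference concrete, here is a minimal sketch in plain Python. It is a toy model, not a real CDC tool: the snapshots, the `change_log` list, and both function names are made up for illustration, and 1,000 rows stand in for the 1 million in the example above.

```python
# Toy comparison: finding changes by full scan vs. reading a change log.
# All names here (old_snapshot, change_log, ...) are illustrative assumptions.

def diff_by_full_scan(old_snapshot, new_snapshot):
    """Compare every row in two snapshots; work grows with table size."""
    changed = []
    for customer_id, new_row in new_snapshot.items():
        if old_snapshot.get(customer_id) != new_row:
            changed.append(customer_id)
    return changed, len(new_snapshot)  # (changed ids, rows examined)


def diff_by_change_log(change_log):
    """Read only the recorded changes; work grows with the number of changes."""
    changed = [event["customer_id"] for event in change_log]
    return changed, len(change_log)  # (changed ids, events examined)


# 1,000 customers stand in for the 1 million in the text.
old_snapshot = {i: {"city": "Austin"} for i in range(1000)}
new_snapshot = {i: dict(row) for i, row in old_snapshot.items()}
change_log = []

# Three customers move; a CDC-style log records each change as it happens.
for customer_id in (7, 42, 99):
    new_snapshot[customer_id]["city"] = "Seattle"
    change_log.append(
        {
            "customer_id": customer_id,
            "before": {"city": "Austin"},
            "after": {"city": "Seattle"},
        }
    )

scan_ids, scan_cost = diff_by_full_scan(old_snapshot, new_snapshot)
log_ids, log_cost = diff_by_change_log(change_log)
print("full scan :", scan_ids, "rows examined:", scan_cost)
print("change log:", log_ids, "events examined:", log_cost)
```

Both approaches find the same three customers, but the full scan has to look at every row to do it, while the change log only touches the three events that were recorded.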

When someone moves to a new city, CDC captures something like this:

    {
      "operation": "UPDATE",
      "table": "customers",
      "before": {
        "customer_id": 12345,
        "name": "Sarah Mitchell",
        "city": "Austin"
      },
      "after": {
        "customer_id": 12345,
        "name": "Sarah Mitchell",
        "city": "Seattle"
      },
      "timestamp": "2025-12-19T10:30:00Z"
    }

You get the complete picture: what changed, when it changed, and both the old and new values.
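What does a downstream system do with an event like this? Here is a rough sketch of a consumer that keeps an in-memory copy of the customers table in sync. The `apply_event` and `changed_fields` helpers are hypothetical, and the `INSERT` and `DELETE` operation names are an assumption, since the example above only shows an `UPDATE`. Real CDC tools each have their own event format.

```python
import json

# The change event from the example above, as a JSON string.
event_json = """
{
  "operation": "UPDATE",
  "table": "customers",
  "before": {"customer_id": 12345, "name": "Sarah Mitchell", "city": "Austin"},
  "after": {"customer_id": 12345, "name": "Sarah Mitchell", "city": "Seattle"},
  "timestamp": "2025-12-19T10:30:00Z"
}
"""


def apply_event(replica, event):
    """Apply one change event to a dict keyed by customer_id (hypothetical helper)."""
    operation = event["operation"]
    if operation in ("INSERT", "UPDATE"):  # assumed operation names
        row = event["after"]
        replica[row["customer_id"]] = row
    elif operation == "DELETE":  # assumed operation name
        row = event["before"]
        replica.pop(row["customer_id"], None)
    else:
        raise ValueError(f"unknown operation: {operation}")
    return replica


def changed_fields(event):
    """Return {field: (old, new)} for fields whose value differs."""
    before = event.get("before") or {}
    after = event.get("after") or {}
    return {
        name: (before.get(name), after.get(name))
        for name in after
        if before.get(name) != after.get(name)
    }


# The downstream copy still has Sarah in Austin until the event arrives.
replica = {12345: {"customer_id": 12345, "name": "Sarah Mitchell", "city": "Austin"}}
event = json.loads(event_json)
apply_event(replica, event)

print(replica[12345]["city"])
print(changed_fields(event))
```

Because the event carries both the old and new values, the consumer can update its copy and also tell that only the city changed, without ever querying the source table.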

You might be wondering, "Okay, but when would I actually need this?" Here are some real-world scenarios where CDC shines:

Maintaining your game progress across devices

You're playing a mobile game like Candy Crush. You complete a level on your phone during lunch. Later that evening, you open the game on your tablet, and boom, your progress is right there. CDC captured your level completion and synced it across devices instantly.

Updating live sports scores in real-time

You're watching a football match on ESPN's website. Every time a goal is scored, the live scoreboard updates within seconds. CDC captures the score change in the database and pushes it to millions of viewers simultaneously, no page refresh needed.

Tracking every transaction in your bank account

Think of your bank statement. Every transaction (withdrawal, deposit, transfer) is logged with exact timestamps and amounts. If there's a dispute, the bank can show you exactly what happened and when. CDC creates this breadcrumb trail automatically for every data change.

Instantly unlocking Netflix after payment

You just paid for your Netflix subscription. Within seconds, the "Payment Required" banner disappears and you can start watching shows. CDC captured your payment status change and notified the streaming service instantly, no manual refresh needed.

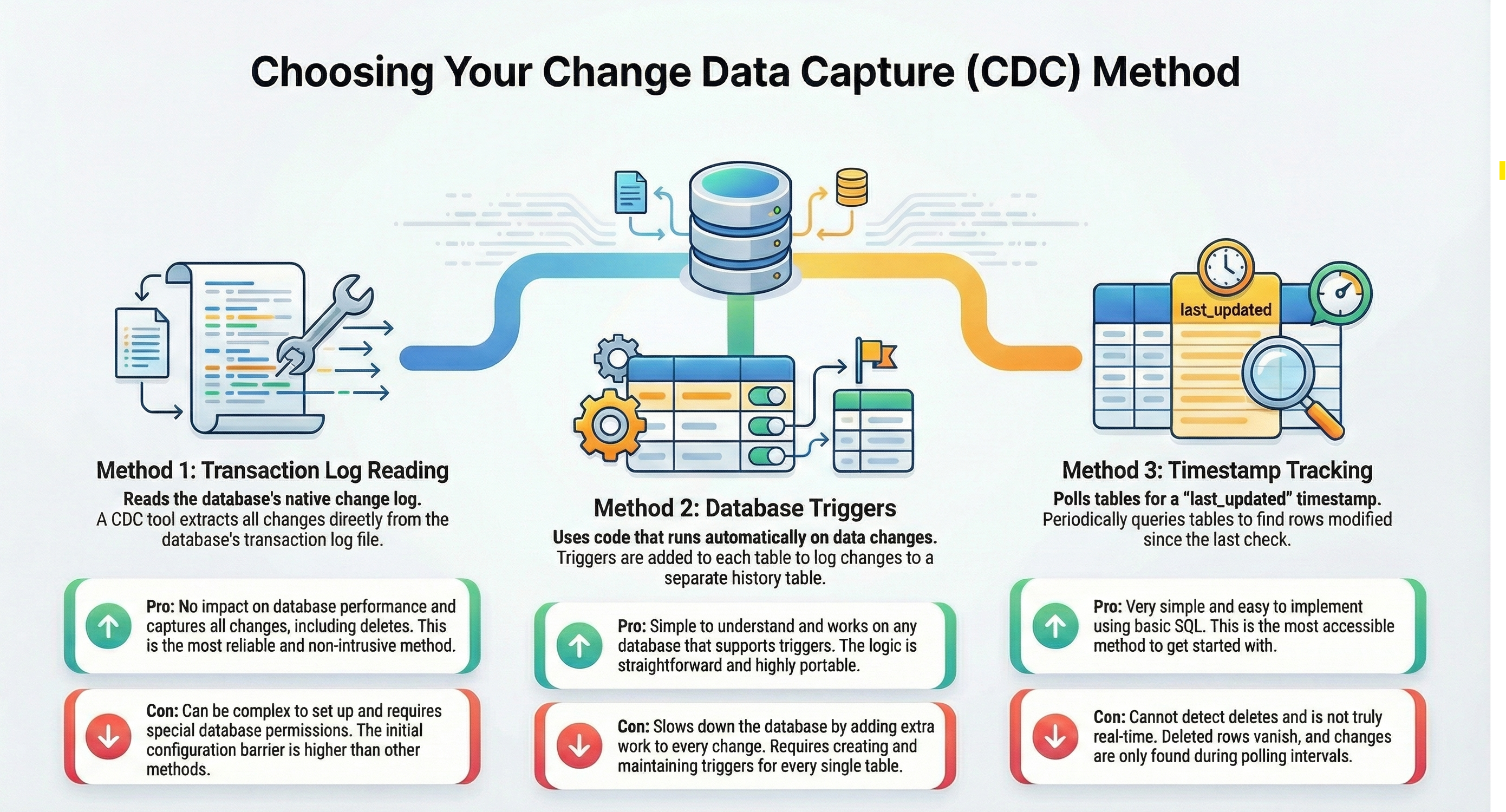

CDC isn't just one technique. There are different ways to capture changes, each with its own trade-offs. The most common approaches are timestamp columns, database triggers, and log-based CDC, which reads the database's transaction log.
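One of those approaches, trigger-based CDC, is easy to try on your laptop. The sketch below uses Python's built-in `sqlite3` module with an in-memory database. The `customers_changes` audit table and the trigger names are made up for this example, and a production setup on another database would look different.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (
        customer_id INTEGER PRIMARY KEY,
        name        TEXT,
        city        TEXT
    );

    -- Hypothetical audit table that the triggers write to.
    CREATE TABLE customers_changes (
        change_id   INTEGER PRIMARY KEY AUTOINCREMENT,
        operation   TEXT,
        customer_id INTEGER,
        old_city    TEXT,
        new_city    TEXT
    );

    CREATE TRIGGER customers_ins AFTER INSERT ON customers BEGIN
        INSERT INTO customers_changes (operation, customer_id, old_city, new_city)
        VALUES ('INSERT', NEW.customer_id, NULL, NEW.city);
    END;

    CREATE TRIGGER customers_upd AFTER UPDATE ON customers BEGIN
        INSERT INTO customers_changes (operation, customer_id, old_city, new_city)
        VALUES ('UPDATE', NEW.customer_id, OLD.city, NEW.city);
    END;

    CREATE TRIGGER customers_del AFTER DELETE ON customers BEGIN
        INSERT INTO customers_changes (operation, customer_id, old_city, new_city)
        VALUES ('DELETE', OLD.customer_id, OLD.city, NULL);
    END;
    """
)

# Normal application writes; the triggers record each one as a side effect.
conn.execute("INSERT INTO customers VALUES (12345, 'Sarah Mitchell', 'Austin')")
conn.execute("UPDATE customers SET city = 'Seattle' WHERE customer_id = 12345")
conn.execute("DELETE FROM customers WHERE customer_id = 12345")

changes = conn.execute(
    "SELECT operation, customer_id, old_city, new_city "
    "FROM customers_changes ORDER BY change_id"
).fetchall()
for change in changes:
    print(change)
```

The trade-off is visible in the code: every write to `customers` now also writes to `customers_changes` in the same transaction, which is simple to set up but adds work to each write.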

Most modern cloud data platforms come with built-in CDC capabilities. Let's look at how two popular platforms handle it.

Snowflake uses something called Streams to track changes.

What's a Stream?

Think of it as a change tracker that sits on top of your table. It automatically records every insert, update, and delete without you doing anything special.

How it works:

    -- Create a stream on your orders table
    CREATE STREAM orders_stream ON TABLE orders;

    -- Later, check what changed
    SELECT * FROM orders_stream;

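When you query the stream, you see only the rows that changed since the stream's current offset. That offset moves forward only when the stream is consumed by a DML statement (for example, an INSERT INTO ... SELECT that reads from the stream) in a committed transaction; a plain SELECT like the one above leaves it where it is. Snowflake also tells you what type of change occurred through the METADATA$ACTION and METADATA$ISUPDATE columns: inserts and deletes are labeled directly, and an update appears as a DELETE row and an INSERT row that both have METADATA$ISUPDATE set to TRUE.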
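How do you read the change type off a stream row? A standard stream adds the metadata columns `METADATA$ACTION` (`INSERT` or `DELETE`) and `METADATA$ISUPDATE`, and an update shows up as a `DELETE` row plus an `INSERT` row with `METADATA$ISUPDATE` set to true. The sketch below classifies rows on that basis. The sample rows are hand-written for illustration (not real query output), and `classify` is a hypothetical helper, not part of any Snowflake library.

```python
def classify(row):
    """Map a stream row's metadata columns to a human-readable change type."""
    action = row["METADATA$ACTION"]
    is_update = row["METADATA$ISUPDATE"]
    if action == "INSERT":
        return "update (new values)" if is_update else "insert"
    if action == "DELETE":
        return "update (old values)" if is_update else "delete"
    raise ValueError(f"unexpected action: {action}")


# Hand-written rows shaped like the result of SELECT * FROM orders_stream.
# The order_id and status columns are assumptions about the orders table.
sample_rows = [
    {"order_id": 1, "status": "pending", "METADATA$ACTION": "INSERT", "METADATA$ISUPDATE": False},
    {"order_id": 2, "status": "pending", "METADATA$ACTION": "DELETE", "METADATA$ISUPDATE": True},
    {"order_id": 2, "status": "shipped", "METADATA$ACTION": "INSERT", "METADATA$ISUPDATE": True},
    {"order_id": 3, "status": "shipped", "METADATA$ACTION": "DELETE", "METADATA$ISUPDATE": False},
]

labels = [classify(row) for row in sample_rows]
for row, label in zip(sample_rows, labels):
    print(row["order_id"], row["status"], "->", label)
```

In this sample, order 1 is a new row, order 2 is an update whose old and new values arrive as two rows, and order 3 is a delete.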

Databricks offers Change Data Feed as part of Delta Lake.

What's Change Data Feed?

It's a feature you enable on Delta tables that tracks row-level changes automatically. Once it is enabled, every modification from that point on gets logged, and you can query the history of changes for as long as the underlying table history is retained.

How it works:

    -- Enable change data feed on a table
    ALTER TABLE customers
    SET TBLPROPERTIES (delta.enableChangeDataFeed = true);

    -- Read the changes from table version 2 through version 10
    SELECT * FROM table_changes('customers', 2, 10);

You can see exactly what changed between any two versions of your table, making it perfect for auditing and incremental processing.
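For incremental processing, you usually want the latest state of each row rather than every intermediate step. Change Data Feed rows carry a `_change_type` column (`insert`, `update_preimage`, `update_postimage`, or `delete`) along with `_commit_version` and `_commit_timestamp`. Here is a small plain-Python sketch of collapsing such rows into "rows to upsert" and "keys to delete". The sample rows are hand-written, `collapse_changes` is a hypothetical helper, and in a real pipeline you would more likely do this in Spark.

```python
def collapse_changes(rows, key="customer_id"):
    """Reduce change rows to the final upserts and deletes per key."""
    upserts, deletes = {}, set()
    # Process commits in order so later changes win.
    for row in sorted(rows, key=lambda r: r["_commit_version"]):
        change_type = row["_change_type"]
        if change_type == "update_preimage":
            continue  # old values; not needed for the final state
        # Strip the metadata columns (they start with an underscore).
        data = {k: v for k, v in row.items() if not k.startswith("_")}
        if change_type in ("insert", "update_postimage"):
            upserts[row[key]] = data
            deletes.discard(row[key])
        elif change_type == "delete":
            upserts.pop(row[key], None)
            deletes.add(row[key])
        else:
            raise ValueError(f"unexpected change type: {change_type}")
    return upserts, deletes


# Hand-written rows shaped like the result of table_changes('customers', 2, 10).
sample_changes = [
    {"customer_id": 1, "city": "Austin", "_change_type": "insert", "_commit_version": 2},
    {"customer_id": 1, "city": "Austin", "_change_type": "update_preimage", "_commit_version": 3},
    {"customer_id": 1, "city": "Seattle", "_change_type": "update_postimage", "_commit_version": 3},
    {"customer_id": 2, "city": "Denver", "_change_type": "insert", "_commit_version": 4},
    {"customer_id": 2, "city": "Denver", "_change_type": "delete", "_commit_version": 5},
]

upserts, deletes = collapse_changes(sample_changes)
print("upsert:", upserts)
print("delete:", deletes)
```

This sketch assumes a key is changed at most once per commit. If one commit can touch the same key several times, you would need an extra ordering rule.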

Change Data Capture is like having a notification system for your database. Instead of constantly checking what changed, CDC tells you exactly what happened, when it happened, and what the old and new values were.

Remember the key points:

CDC tracks inserts, updates, and deletes as they happen

Log-based CDC is usually the most efficient approach for production systems

Modern platforms like Snowflake and Databricks have built-in CDC features

Start simple with triggers or timestamps, then graduate to specialized tools as you scale

Not every use case needs CDC; sometimes a simple batch job is better
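If you want to try the "start simple with timestamps" advice, here is a minimal sketch using Python's built-in `sqlite3` module. It assumes the table has an `updated_at` column that the application sets on every write, and the `poll_changes` helper and its watermark logic are made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, city TEXT, updated_at TEXT)"
)
conn.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [(1, "Austin", "2025-12-19T09:00:00Z"), (2, "Denver", "2025-12-19T09:05:00Z")],
)


def poll_changes(conn, watermark):
    """Return rows modified after the watermark, plus the new watermark."""
    rows = conn.execute(
        "SELECT customer_id, city, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    ).fetchall()
    # ISO-8601 strings in the same format sort in time order.
    new_watermark = rows[-1][2] if rows else watermark
    return rows, new_watermark


# First poll: everything is new.
first_batch, watermark = poll_changes(conn, "")

# One customer moves, another is deleted.
conn.execute(
    "UPDATE customers SET city = 'Seattle', updated_at = '2025-12-19T10:30:00Z' "
    "WHERE customer_id = 1"
)
conn.execute("DELETE FROM customers WHERE customer_id = 2")

# Second poll: only rows touched since the last watermark.
second_batch, watermark = poll_changes(conn, watermark)
print(first_batch)
print(second_batch)
```

Notice what the second poll misses: the deleted customer never shows up, because there is no row left to carry a timestamp. That gap is one reason teams move to triggers or log-based tools as they scale.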
